My third year project at university was to develop and assess a search procedure for non-Boolean satisfiability formulas. It is a generalisation of the Boolean Davis-Putnam procedure, which operates directly on the non-Boolean formula, rather than translating to a Boolean formula and running a Boolean SAT solver on the translation. The project report and source code are available to download.

| Report (PostScript) | Satz (tidied up) | NB-satz (with 'moms' heuristic) |

| Report (ASCII) | Satz (stripped down) | NB-satz (with 'up' heuristic) |

| Suggestion for further work | An example Non-Boolean formula |

I wrote a path planner for a 3D character to negotiate a route through obstacles in a scene. It used the standard A* search algorithm, but instead of discretising the map to a grid and having 4 branches at each node corresponding to the 4 adjacent cells, the choices at each node are the available moves (i.e. which steps the character can take - based on the animations available).

There were also hard constraints on what steps can follow each other (a right step must be followed by a left step) as well as soft constraints (try to avoid alternating sharp right and left turns). I haven't read about this approach to A* before (most articles I have seen construct a series of moves from the result of a grid-based A* search), but I wouldn't be surprised if it had. The disadvantage of this approach compared the grid-based method is that at each node there may be a higher branching factor, making the search space larger (although the search space can be reduced by adjusting the soft constraints and the A* heuristic).

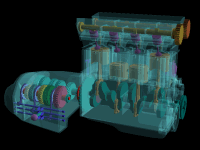

I've previously worked on 3D graphics, programming skeletal animation using a bone structure and vertex weights. I've also written geometry and animation exporter plug-ins for 3D Studio MAX.

A previous job required me to write a statistical part-of-speech tagger. This is a program that takes a sentence as input and generates the tags corresponding to each word's part-of-speech as output (e.g. noun, verb, adjective, adverb). It works by constructing a Markov model from some training data, then traversing this model with the Viterbi algorithm to classify words.

I have developed some low-level computer vision algorithms in VHDL, a language for specifying chip designs. Using FPGAs (programmable hardware), some simple algorithms can be coded to exploit parallelism and repetition of a single task.

I wrote a program that solves Sudoku puzzles one day. The program works in a very similar way to the SAT solver I developed for my third year university project, by picking a square to instantiate and propagating the constraints to its neighbours, backtracking when a contradiction is reached.